NVIDIA AI Platform Smashes Every MLPerf Category, from Data Centre to Edge

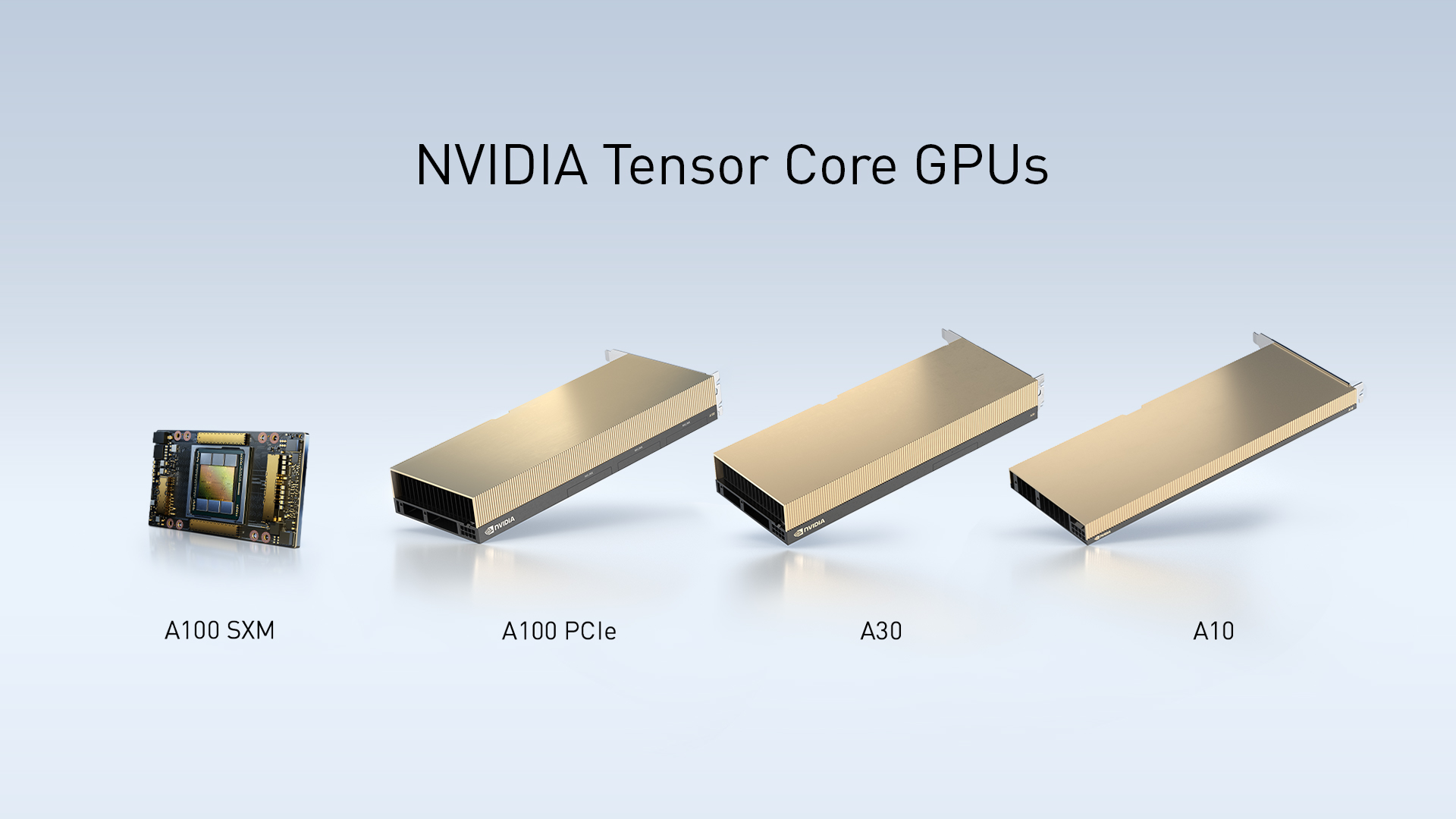

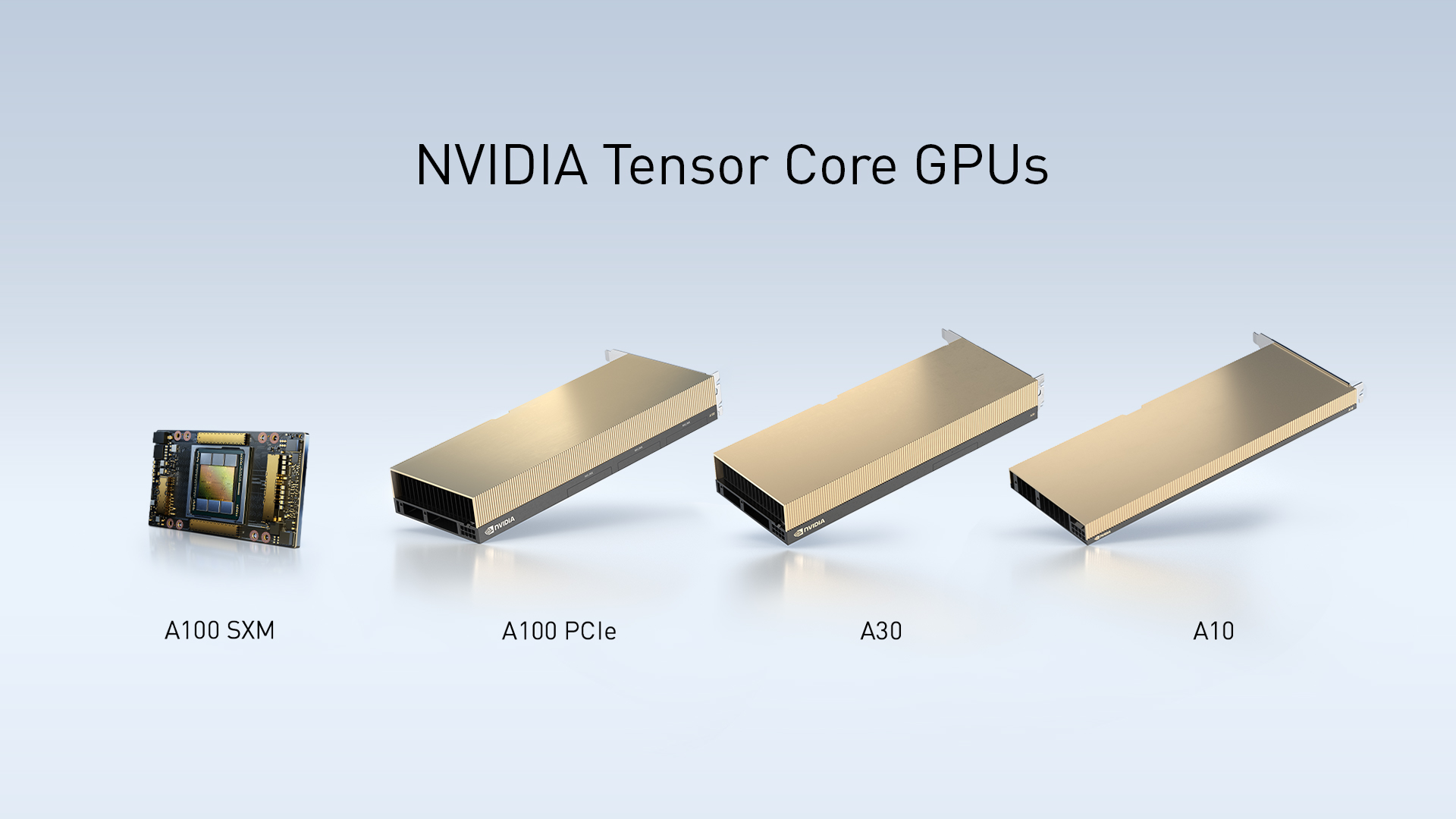

SINGAPORE—April 22, 2021—NVIDIA today announced that its AI inference platform, newly expanded with NVIDIA® A30 and A10 GPUs for mainstream servers, has achieved record-setting performance across every category on the latest release of MLPerf.

MLPerf is the industry’s established benchmark for measuring AI performance across a range of workloads spanning computer vision, medical imaging, recommender systems, speech recognition and natural language processing.

Debuting on MLPerf, NVIDIA A30 and A10 GPUs combine high performance with low power consumption to provide enterprises with mainstream options for a broad range of AI inference, training, graphics and traditional enterprise compute workloads. Cisco, Dell Technologies, Hewlett Packard Enterprise, Inspur and Lenovo are expected to integrate the GPUs into their highest volume servers starting this summer.

NVIDIA achieved these results taking advantage of the full breadth of the NVIDIA AI platform – encompassing a wide range of GPUs and AI software, including TensorRT™ and NVIDIA Triton™ Inference Server – which is deployed by leading enterprises, such as Microsoft, Pinterest, Postmates, T-Mobile, USPS and WeChat.

“As AI continues to transform every industry, MLPerf is becoming an even more important tool for companies to make informed decisions on their IT infrastructure investments,” said Ian Buck, general manager and vice president of Accelerated Computing at NVIDIA. “Now, with every major OEM submitting MLPerf results, NVIDIA and our partners are focusing not only on delivering world-leading performance for AI, but on democratising AI with a coming wave of enterprise servers powered by our new A30 and A10 GPUs.”

MLPerf Results

NVIDIA is the only company to submit results for every test in the data centre and edge categories, delivering top performance results across all MLPerf workloads.

Several submissions also use Triton Inference Server, which simplifies the complexity of deploying AI in applications by supporting models from all major frameworks, running on GPUs, as well as CPUs, and optimising for different query types including batch, real-time and streaming. Triton submissions achieved performance close to that of the most optimised GPU implementations, as well as CPU implementations, with comparable configurations.

NVIDIA also broke new ground with its submissions using the NVIDIA Ampere architecture’s Multi-Instance GPU capability by simultaneously running all seven MLPerf Offline tests on a single GPU using seven MIG instances. The configuration showed nearly identical performance compared with a single MIG instance running alone.

These submissions demonstrate MIG’s performance and versatility, which enable infrastructure managers to provision right-sized amounts of GPU compute for specific applications to get maximum output from every data centre GPU.

In addition to NVIDIA’s own submissions, NVIDIA partners Alibaba Cloud, Dell Technologies, Fujitsu, GIGABYTE, HPE, Inspur, Lenovo and Supermicro submitted a total of over 360 results using NVIDIA GPUs.

NVIDIA’s Expanding AI Platform

The NVIDIA A30 and A10 GPUs are the latest additions to the NVIDIA AI platform, which includes NVIDIA Ampere architecture GPUs, NVIDIA Jetson AGX Xavier™ and Jetson Xavier NX, and a full stack of NVIDIA software optimised for accelerating AI.

The A30 delivers versatile performance for industry-standard servers, supporting a broad range of AI inference and mainstream enterprise compute workloads, such as recommender systems, conversational AI and computer vision.

The NVIDIA A10 GPU accelerates deep learning inference, interactive rendering, computer-aided design and cloud gaming, enabling enterprises to support mixed AI and graphics workloads on a common infrastructure. Using NVIDIA virtual GPU software, management can be streamlined to improve the utilisation and provisioning of virtual desktops used by designers, engineers, artists and scientists.

The NVIDIA Jetson platform, based on the NVIDIA Xavier™ system-on-module, provides server-class AI performance at the edge, enabling a wide variety of applications in robotics, healthcare, retail and smart cities. Built on NVIDIA’s unified architecture and the CUDA-X™ software stack, Jetson is the only platform capable of running all the edge workloads in compact designs while consuming less than 30W of power.
